From the Prove AI Team

Engineering depth, product thinking, and field notes from building the debugging layer for GenAI pipelines.

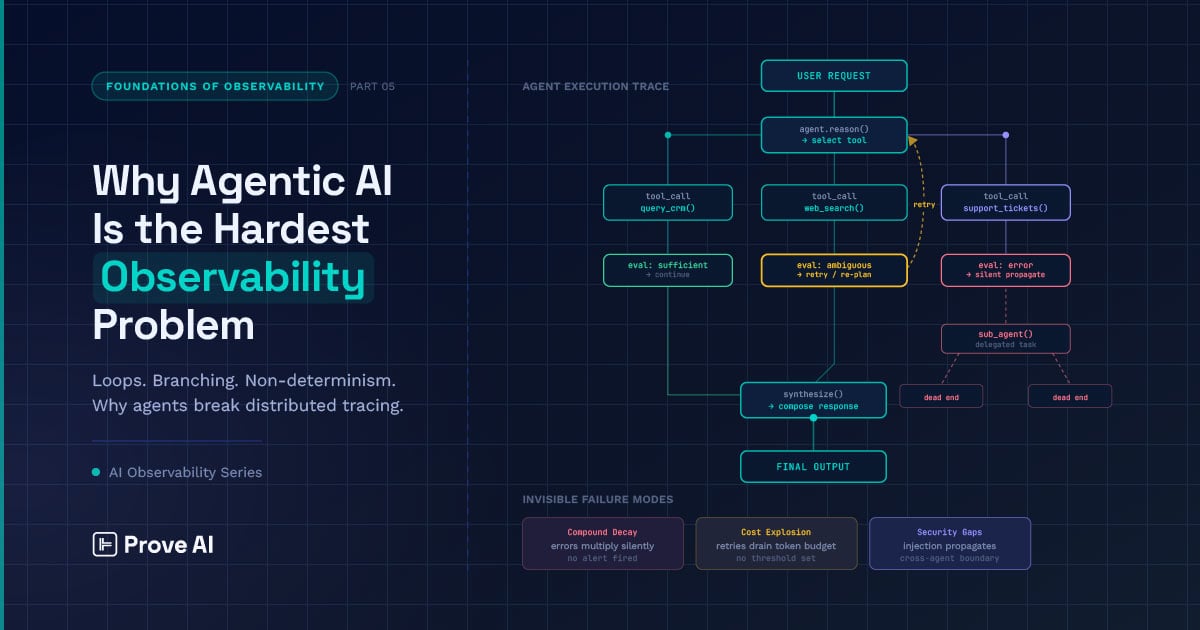

When agents in a multi-agent workflow quietly duplicate work or produce contradictory outputs — and nothing throws an exception — you’re paying the coordination tax.
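One narrow slice of this coordination problem, duplicated work, can be surfaced from an agent activity log even when nothing raises an exception. The sketch below assumes a hypothetical log of `(agent_name, task_description)` tuples. It treats two tasks as duplicates only when they match after case and whitespace normalization. It is an illustration, not Prove AI's detection method.

```python
from collections import defaultdict


def find_duplicated_work(events):
    """Return {normalized_task: sorted agent names} for tasks handled by more than one agent.

    events: iterable of (agent_name, task_description) tuples (hypothetical log shape).
    """
    handlers = defaultdict(set)
    for agent, task in events:
        # Normalize case and collapse whitespace so trivially different strings match.
        key = " ".join(task.lower().split())
        handlers[key].add(agent)
    # Keep only tasks that more than one agent picked up.
    return {task: sorted(agents) for task, agents in handlers.items() if len(agents) > 1}


events = [
    ("planner", "Summarize Q3 revenue report"),
    ("researcher", "summarize  q3 revenue report"),
    ("writer", "Draft customer email"),
]
print(find_duplicated_work(events))
# {'summarize q3 revenue report': ['planner', 'researcher']}
```

Exact matching misses paraphrased duplicates and does not detect contradictory outputs. Catching those would require a semantic comparison, such as embedding similarity or an LLM-based judge, which this sketch does not attempt.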

Remediation is the logical next step after observability — surfacing not just what’s broken, but which issues to prioritize and how to fix them. The capstone of the Foundations series.

Tell me if this sounds familiar: you’ve set up a comprehensive suite of benchmarks, and your agent is sitting at a triumphant 95% accuracy on your eval set. Then you wire it into a ten-step workflow, only to find that your actual success rate in production is …
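Much of the gap between eval accuracy and workflow success comes from compounding. As a rough illustration, assume each step succeeds independently with the same per-step accuracy (real steps are rarely independent). Under that assumption, the end-to-end success rate is the per-step accuracy raised to the number of steps. The helper name below is hypothetical.

```python
def end_to_end_success(per_step_accuracy: float, num_steps: int) -> float:
    """Probability that every step of a sequential workflow succeeds.

    Assumes (hypothetically) that steps fail independently and share one accuracy.
    """
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be in [0, 1]")
    if num_steps < 0:
        raise ValueError("num_steps must be non-negative")
    return per_step_accuracy ** num_steps


for steps in (1, 5, 10):
    print(f"{steps:>2}-step workflow: {end_to_end_success(0.95, steps):.1%}")
#  1-step workflow: 95.0%
#  5-step workflow: 77.4%
# 10-step workflow: 59.9%
```

Correlated failures, retries, and steps of differing difficulty all move the real number. The independence model is only a first-order intuition for why ten steps at 95% land well below 95%.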

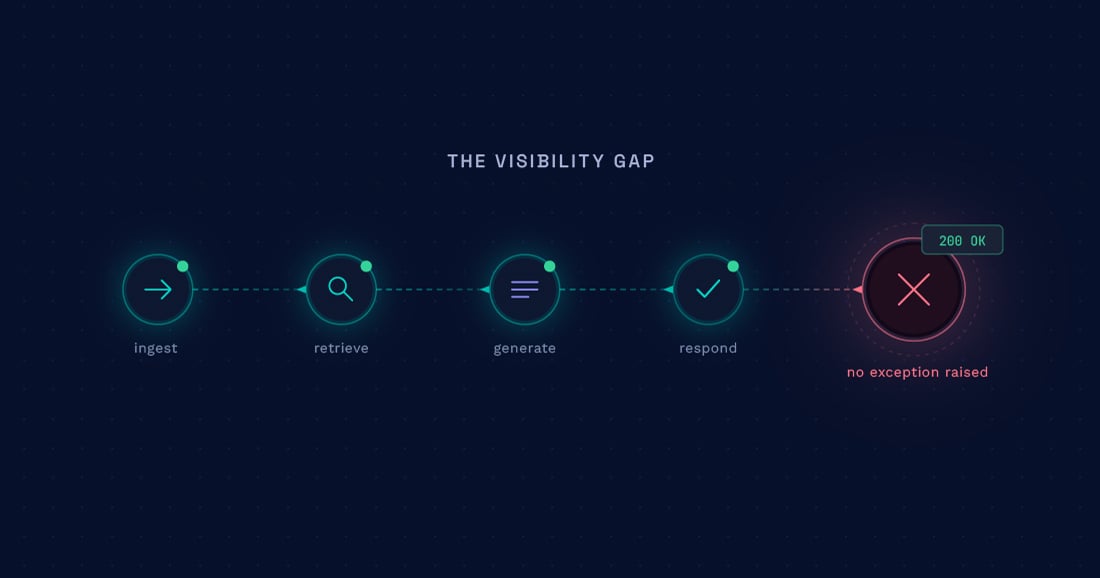

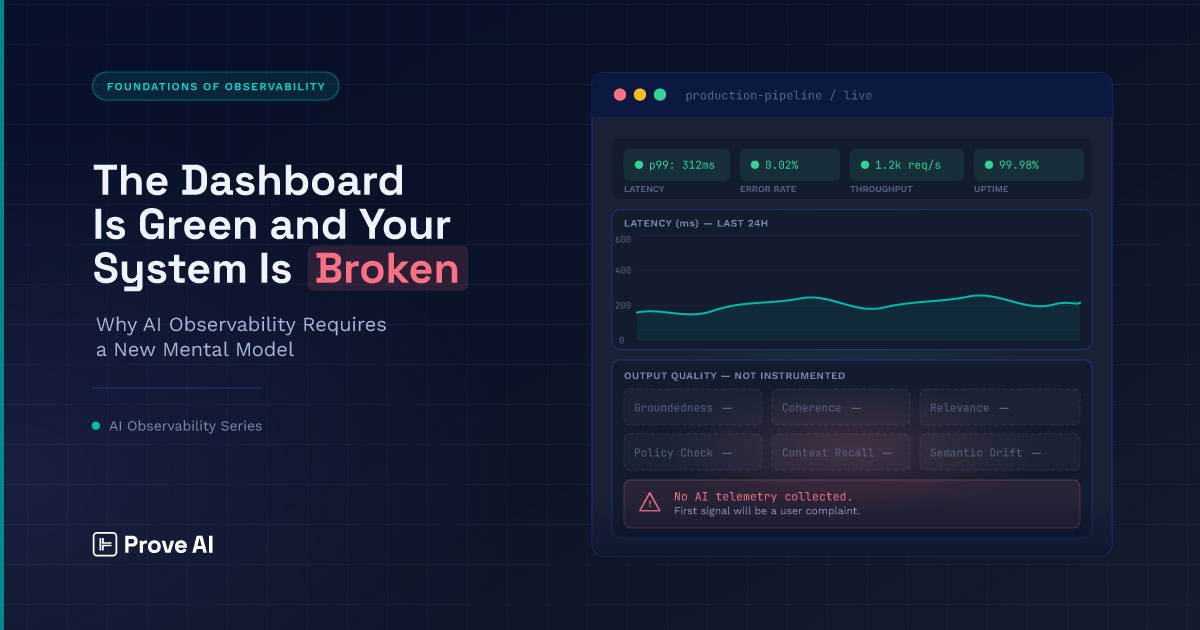

Conventional observability is very good at catching execution failures. Across exceptions, timeouts, non-2xx responses, queue backpressure, and memory pressure, most of the instrumentation stack is optimized for cases where one thing …

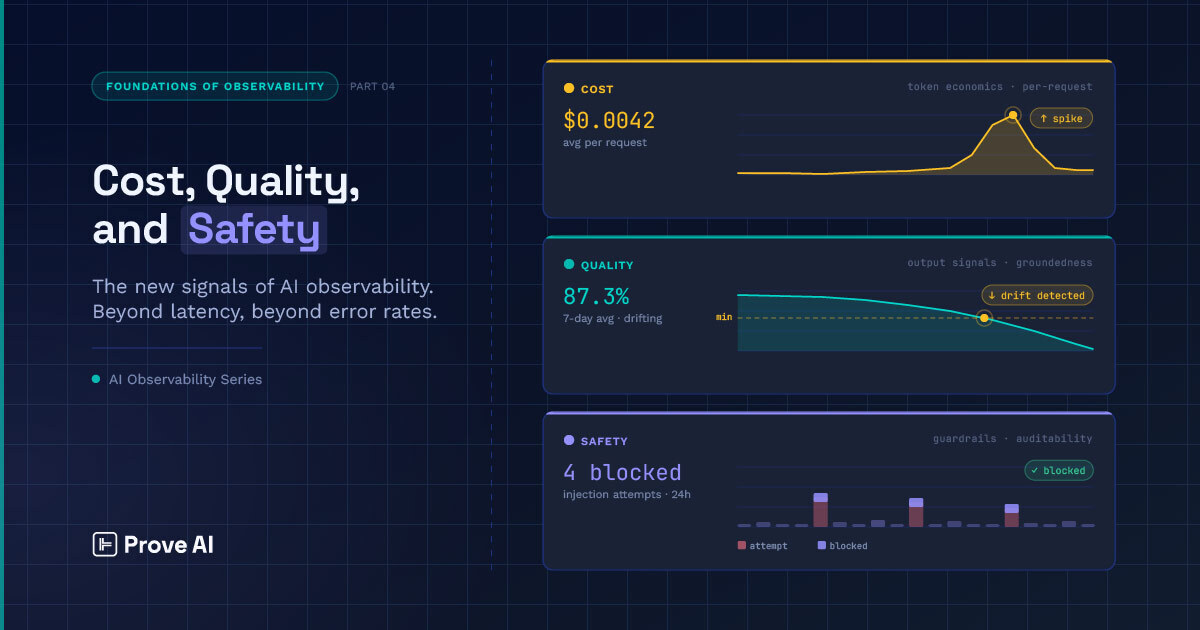

Foundations 4 closed on a provocation: the signals that matter for AI systems (quality, cost, and safety) extend observability into a measurement layer that distributed tracing alone cannot cover. But it also flagged a harder problem waiting …

If you’ve instrumented an LLM system recently, you’re likely already aware of how much telemetry the observability stack can offer you: latency per completion call, token throughput, retrieval latency from your vector store, and error rates across …
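To make a few of those signals concrete (latency per completion call, token throughput, and error rate), here is a minimal in-memory sketch. `CompletionTelemetry`, `timed_call`, and `fake_completion` are hypothetical names. The sketch assumes the wrapped function returns a `(text, token_count)` pair.

```python
import time
from dataclasses import dataclass, field


@dataclass
class CompletionTelemetry:
    """In-memory counters for per-call latency, output tokens, and errors."""
    latencies_s: list = field(default_factory=list)
    output_tokens: int = 0
    calls: int = 0
    errors: int = 0

    def record(self, latency_s: float, tokens: int = 0, error: bool = False) -> None:
        self.calls += 1
        self.latencies_s.append(latency_s)
        self.output_tokens += tokens
        if error:
            self.errors += 1

    def tokens_per_second(self) -> float:
        # Output tokens divided by total time spent inside completion calls.
        total = sum(self.latencies_s)
        return self.output_tokens / total if total > 0 else 0.0

    def error_rate(self) -> float:
        return self.errors / self.calls if self.calls else 0.0


def timed_call(telemetry, fn, *args, **kwargs):
    """Run a completion function, recording its latency, token count, and any failure."""
    start = time.perf_counter()
    try:
        text, tokens = fn(*args, **kwargs)  # assumes fn returns (text, token_count)
    except Exception:
        telemetry.record(time.perf_counter() - start, error=True)
        raise
    telemetry.record(time.perf_counter() - start, tokens=tokens)
    return text


def fake_completion(prompt):  # hypothetical stand-in for a real model client
    return prompt.upper(), len(prompt.split())


t = CompletionTelemetry()
print(timed_call(t, fake_completion, "hello observability world"))  # HELLO OBSERVABILITY WORLD
print(t.calls, t.output_tokens, t.error_rate())  # 1 3 0.0
```

A production system would normally export these values to a metrics backend (for example, as OpenTelemetry histograms and counters) rather than keep them in memory. The same wrapper pattern could also time vector-store retrieval calls.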

Discover why your AI project may only be 20% done and the critical steps to achieve production-grade systems.

Explore how Prove AI addresses the critical need for observability and governance in generative AI.

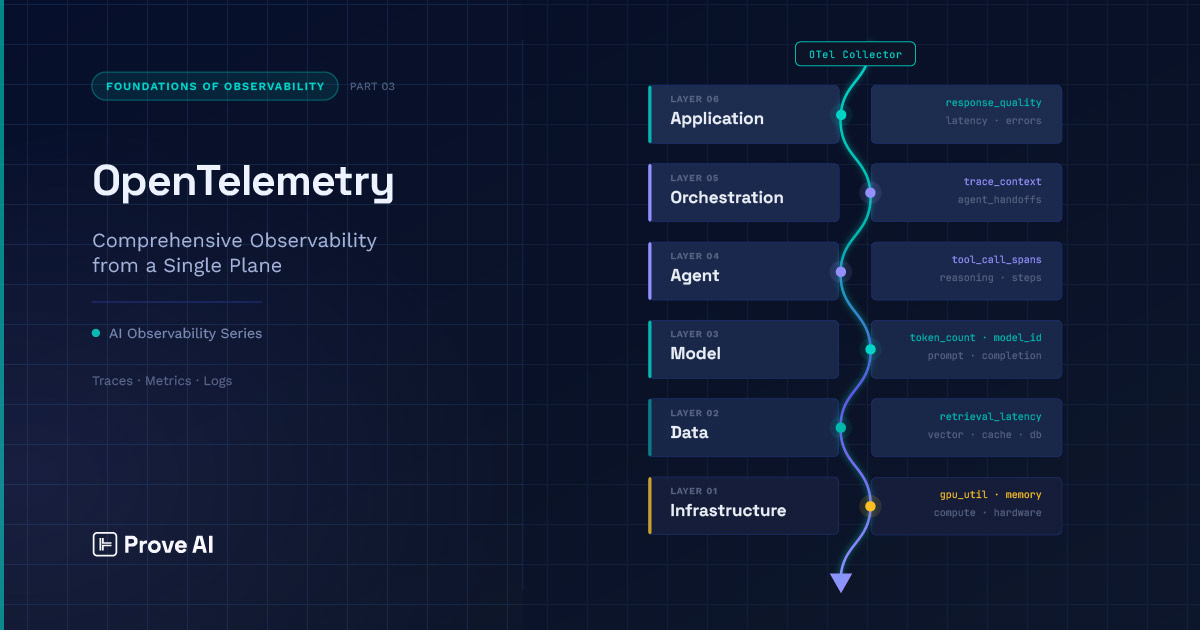

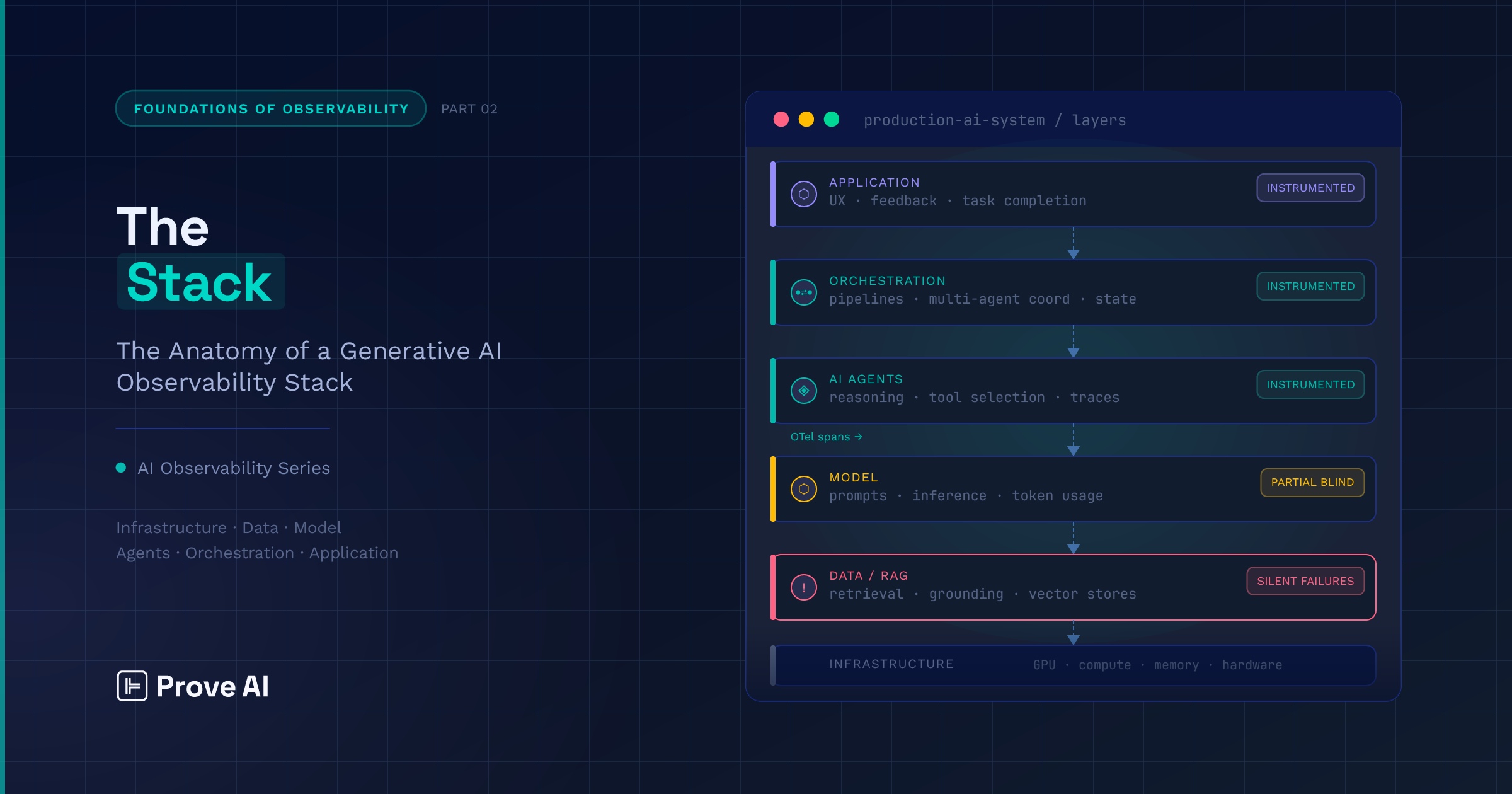

Part 2 of this series laid out the observability stack — six interdependent layers running from infrastructure through application, where failures at the bottom tend to surface at the top in forms that obscure their origin and derail investigations.

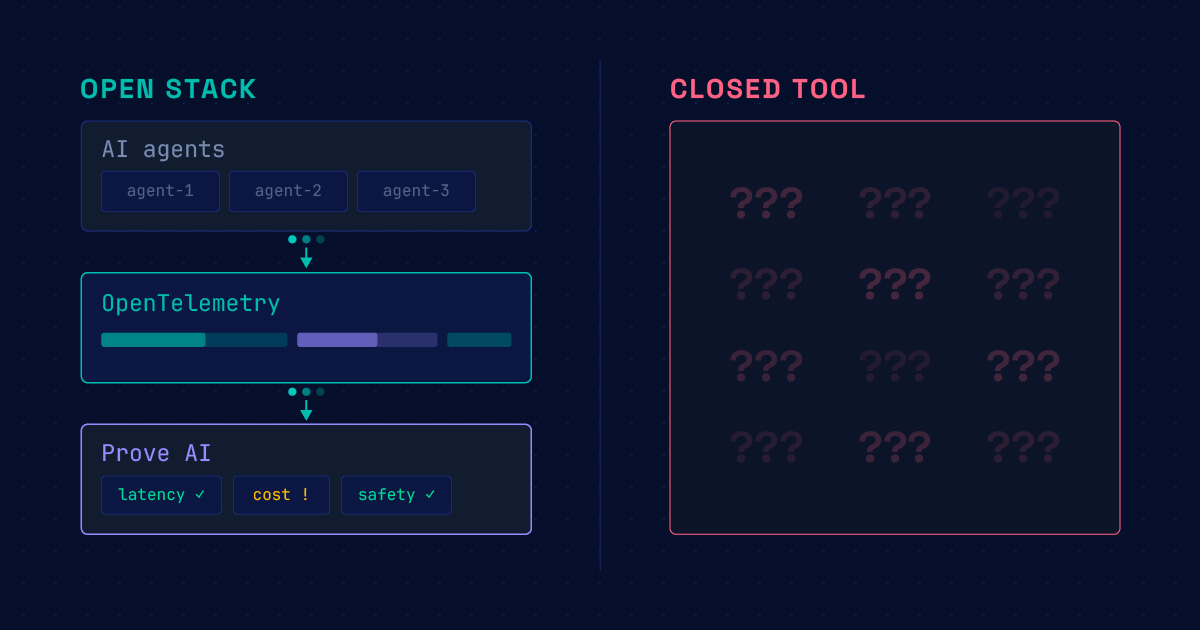

If Part 1 made the case for AI observability, Part 2 provides a blueprint for building the foundation for end-to-end visibility across your AI ecosystem. Before you can decide what to instrument, what guardrails you need, how best to detect anomalies or …

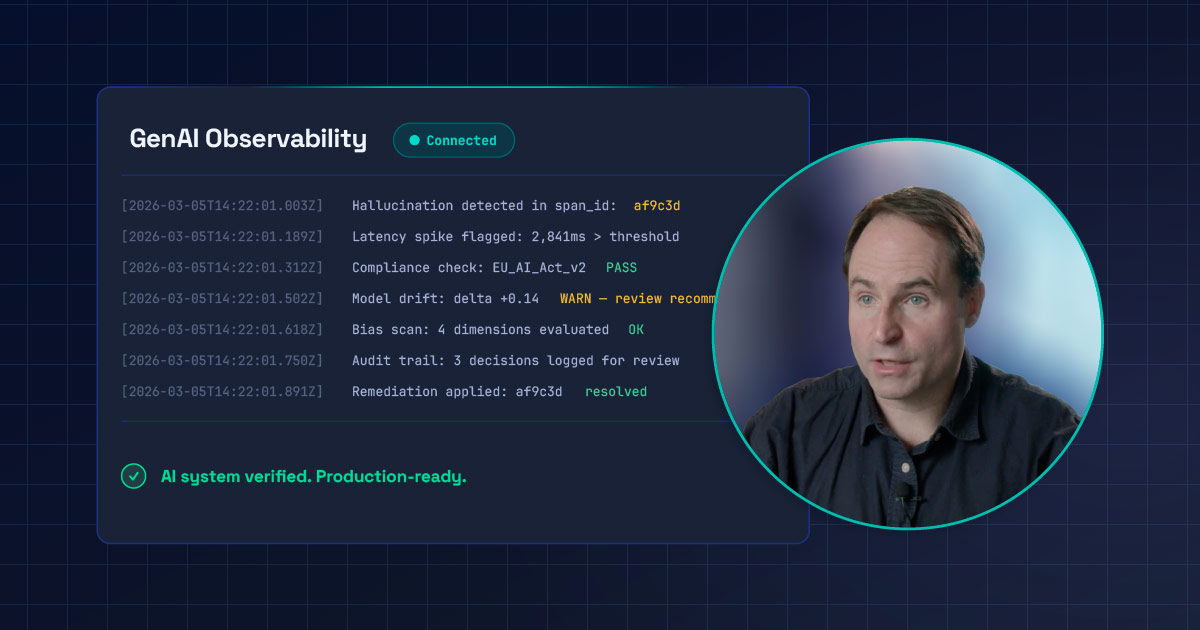

Navigating AI governance and fixing telemetry issues with Prove AI CTO Greg Whalen.

AI observability needs a new approach to detect and diagnose issues that traditional monitoring misses.

As CTO of Prove AI, Greg Whalen operates at the sharp edge of one of technology’s most urgent challenges: transforming generative AI from impressive prototype to production-grade, trusted enterprise system.

Greg Whalen argues enterprises must prioritize observability and governance to turn generative AI prototypes into production-grade systems.

Prove AI enhances generative AI observability with containerized deployments and AI-guided remediation.

Discover where traditional observability frameworks fall short for GenAI telemetry, and why tailored solutions are needed.

The painful debugging cycles we see today stem not from bad models, but from incomplete, mutable, or poorly ordered system data.
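To make the three data problems named above concrete, here is a minimal sketch. It assumes a hypothetical event log in which each record carries a monotonically increasing sequence number. Frozen records guard against mutation, and a simple audit flags gaps (incomplete data) and out-of-order arrivals (poor ordering). `Event` and `audit_events` are illustrative names, not part of any Prove AI API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class Event:
    seq: int   # hypothetical monotonically increasing sequence number
    name: str


def audit_events(events):
    """Report missing sequence numbers and out-of-order arrivals in an event log."""
    seqs = [e.seq for e in events]
    # Any sequence number smaller than its predecessor arrived out of order.
    out_of_order = [b for a, b in zip(seqs, seqs[1:]) if b < a]
    # Gaps between the smallest and largest observed sequence numbers.
    expected = set(range(min(seqs), max(seqs) + 1)) if seqs else set()
    missing = sorted(expected - set(seqs))
    return {"missing": missing, "out_of_order": out_of_order}


log = [Event(1, "retrieve"), Event(3, "generate"), Event(2, "rerank"), Event(5, "respond")]
print(audit_events(log))  # {'missing': [4], 'out_of_order': [2]}
```

The audit only checks sequence numbers. It cannot tell whether a record's contents are complete, and it does not detect duplicate sequence numbers.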

As AI crosses from novelty to expectation, CTOs must shift focus from experimentation to building durable, scalable foundations.

AI systems need observability tools that move beyond monitoring to enable action.